Does Transparency About Training Data Change How Users Trust AI?

Studying how transparency about an AI's training data affects how users perceive and trust the system.

Overview

Explainable AI has many ways to show how a model behaves. Knowing how a model behaves helps users follow its logic, catch errors, and decide when to rely on it. But without knowing what the model learned from, that picture is incomplete. This project explores what shifts when training data becomes part of the explanation.

I designed data-centric explanations: descriptions of what an AI model was trained on, written for everyday users rather than technical audiences. Across two sequential user studies, I examined how this form of transparency shapes users' trust and fairness perceptions of AI systems.

I led this project end to end: conceptualizing the data-centric explanation framework, designing and iterating on the prototype across both studies, recruiting all 44 participants and running their sessions, and conducting the full data analysis.

The Problem

AI explainability has largely focused on model behavior: how a model arrives at its outputs. The data a model was trained on has received far less attention, even though it shapes everything: what the model knows, what it misses, and where it might fail. Without visibility into training data, users are left to trust an AI system without the information they need to evaluate whether that trust is warranted. This project set out to understand what changes when that information is made available.

Model Explanations

Describe internal logic, feature weights, or decision rules of the model.

Output Explanations

Explain why a specific prediction was made for a given input.

Data-Centric Explanations (This Work)

Describe the training data: its sources, composition, and characteristics.

Research Question

How does transparency about an AI system's training data affect how users perceive and trust the system?

Methods

The project used two sequential studies with different goals and methods. The first was a formative study aimed at understanding how users respond to training data information and what they find meaningful, analyzed through thematic analysis of semi-structured interviews. The second was an evaluative study that tested a refined prototype under controlled conditions, combining post-task questionnaires and post-study interviews to capture both quantitative and qualitative responses.

Semi-Structured Interviews

Used in Study 1 to explore how users interpreted and responded to training data explanations.

Thematic Analysis

Applied to Study 1 transcripts to surface themes around user sense-making of training data provenance.

Post-Task Questionnaires

Used in Study 2 to measure trust, fairness perception, and reliance intentions with validated scales.

Mixed Methods Analysis

Study 2 combined quantitative scale scores with post-study interview data to triangulate findings.

Research Process

Identifying the Gap

A review of XAI literature revealed a consistent blind spot: existing explanation approaches addressed model behavior and outputs, but rarely surfaced information about what the model was trained on. Yet training data is where a model's assumptions, coverage, and potential biases originate. This gap motivated the core question of the project.

Concept Development

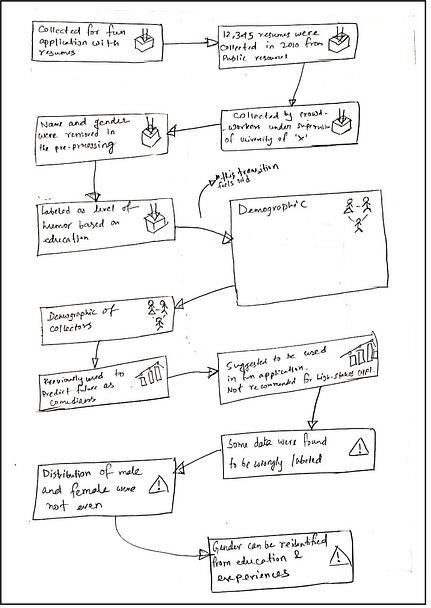

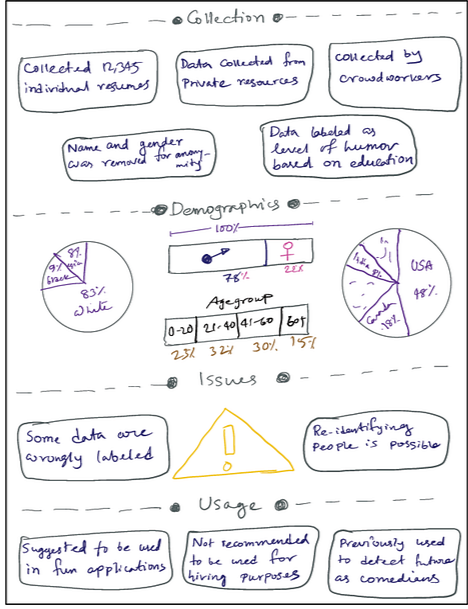

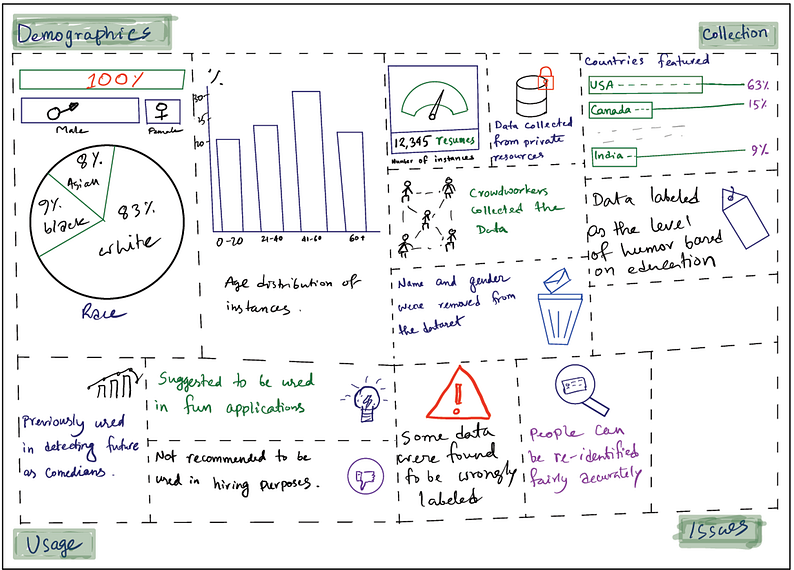

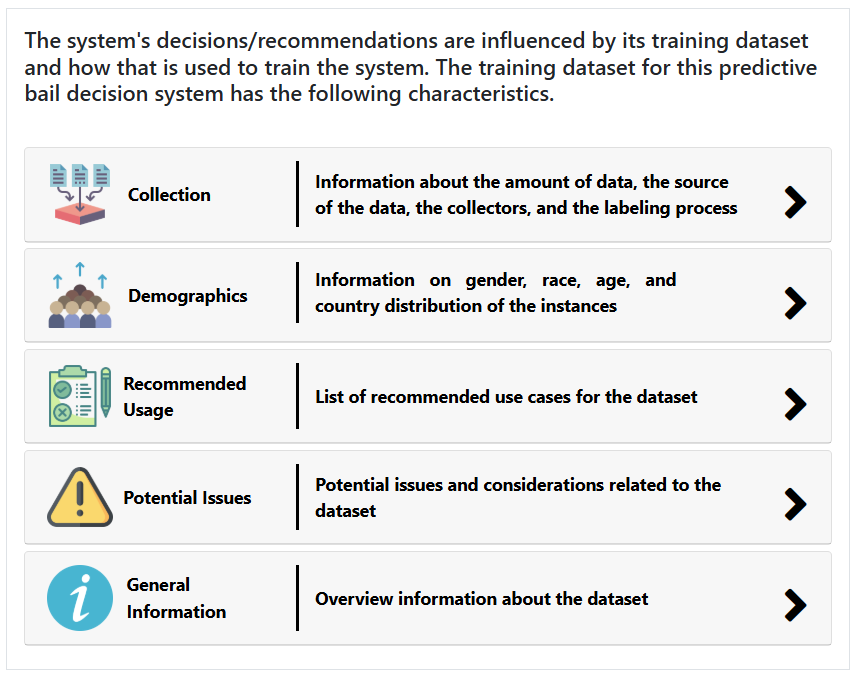

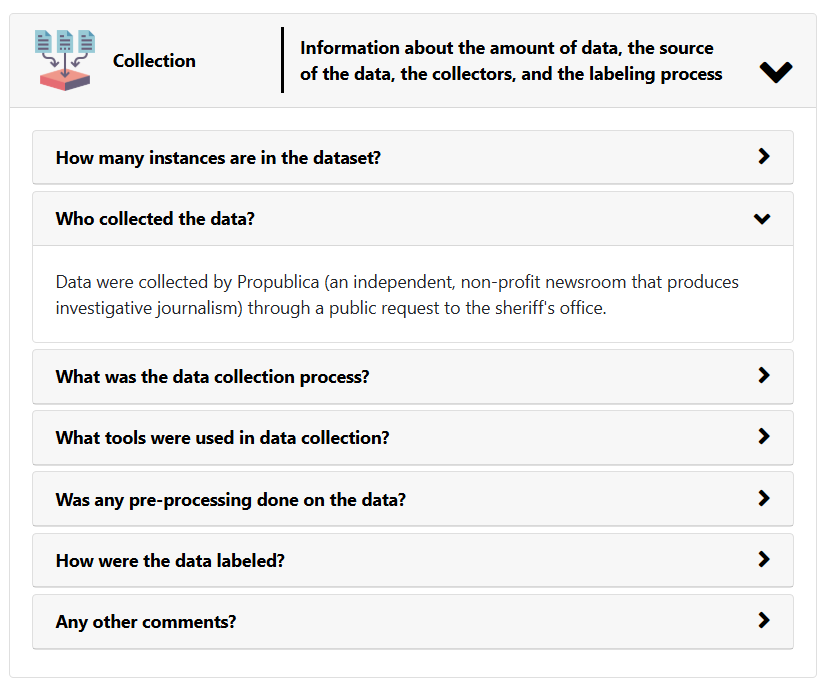

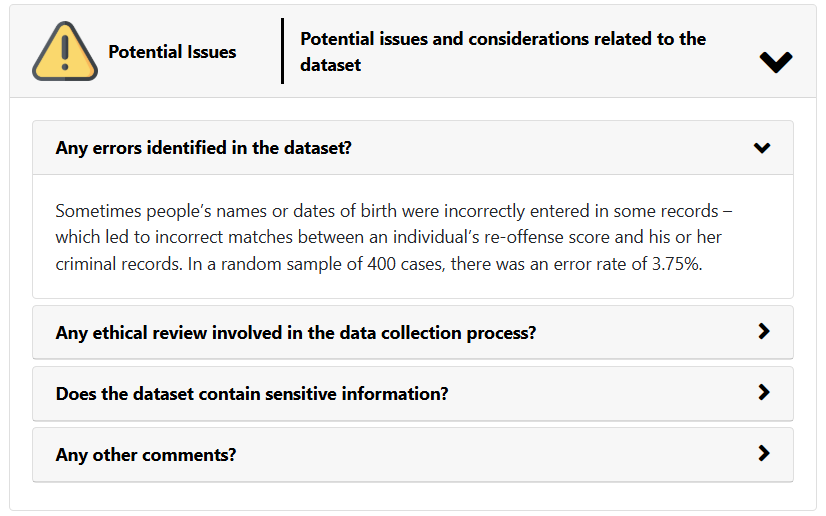

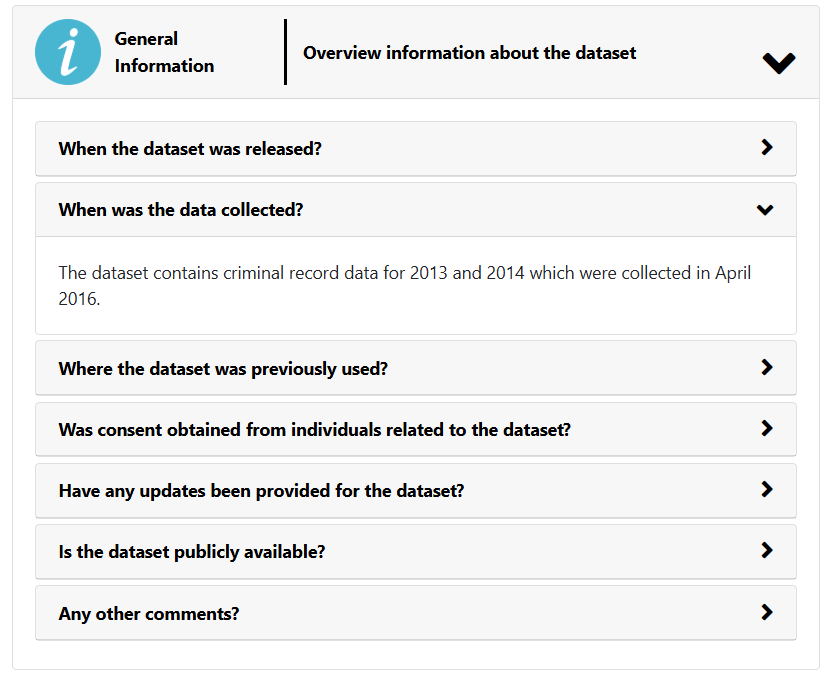

The design process began with Gebru et al.'s datasheets, a documentation format built for ML practitioners. Through iterative piloting of different presentation styles and information subsets, I adapted this into a five-category, question-based format accessible to everyday users.

Study 1

The goal of Study 1 was to understand what participants generally knew about machine learning systems and how they would respond to data-centric explanations. Participants were shown an early prototype and interviewed about their knowledge of ML, their ideas on algorithmic fairness, and their reactions to the explanation format.

- All 17 participants reacted positively to data-centric explanations and were interested in knowing more about training data

- Over half (9/17) were unaware of fairness issues in ML systems, suggesting a significant transparency gap

- The Q&A format was well received; participants felt it helped them focus on what they cared about most

- ML experts raised concerns that some information might be hard for non-experts to interpret, or could prompt unnecessary complaints

- A few participants (3/17) found some answers too shallow and wanted more technical depth

Based on this feedback, I made three changes to the prototype:

- Added more depth and detail to nearly all answers in the prototype

- Removed low-utility questions (dataset creators, funding source, maintenance) based on usefulness ratings

- Refined language throughout to improve accessibility for non-expert users

Final Prototype

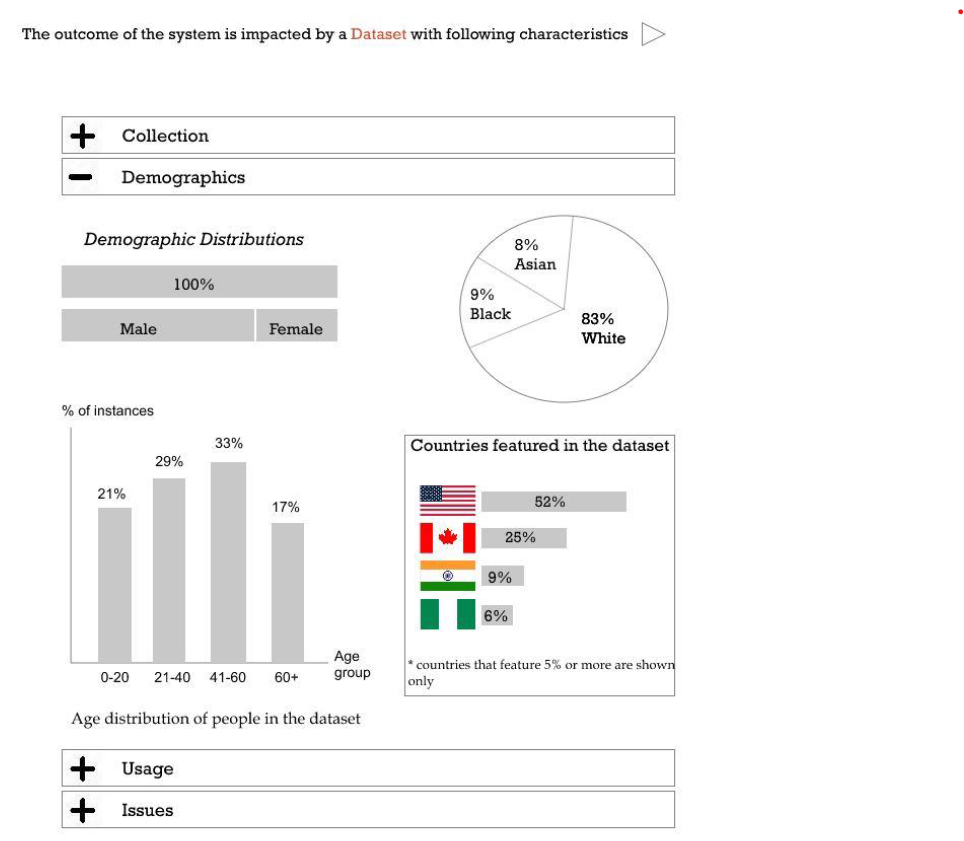

Following Study 1, the prototype was refined and built into a high-fidelity interface used in the evaluative study. It presented training data information across five categories using a question-based format, allowing users to explore at their own pace.
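
A minimal sketch of how a question-based explanation of this kind could be represented as data. The class names, the helper method, and the sample question and answer text are all hypothetical and are not taken from the prototype. Only the category names "Demographics" and "Collection" appear in this write-up, so the other three categories are left out rather than guessed.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class QuestionAnswer:
    """One user-facing question about the training data, with its answer."""
    question: str
    answer: str


@dataclass
class Category:
    """A named group of questions (the prototype used five such groups)."""
    name: str
    items: List[QuestionAnswer] = field(default_factory=list)


@dataclass
class DataCentricExplanation:
    """Training data description for one AI system, organized by category."""
    system: str
    categories: List[Category] = field(default_factory=list)

    def find_answer(self, category_name: str, question: str) -> Optional[str]:
        """Return the answer to a question, or None if it is not present."""
        for category in self.categories:
            if category.name != category_name:
                continue
            for item in category.items:
                if item.question == question:
                    return item.answer
        return None


# Hypothetical content: placeholder wording, not the study's actual text.
explanation = DataCentricExplanation(
    system="Automated speech recognition",
    categories=[
        Category("Demographics", [
            QuestionAnswer(
                "Who is represented in the training data?",
                "(placeholder answer)",
            ),
        ]),
        Category("Collection", [
            QuestionAnswer(
                "How was the training data collected?",
                "(placeholder answer)",
            ),
        ]),
    ],
)

# Users pick the question they care about instead of reading everything.
print(explanation.find_answer("Collection", "How was the training data collected?"))
```

Grouping questions under categories mirrors the explore-at-your-own-pace interaction described above: each answer can be looked up independently of the rest.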

Study 2

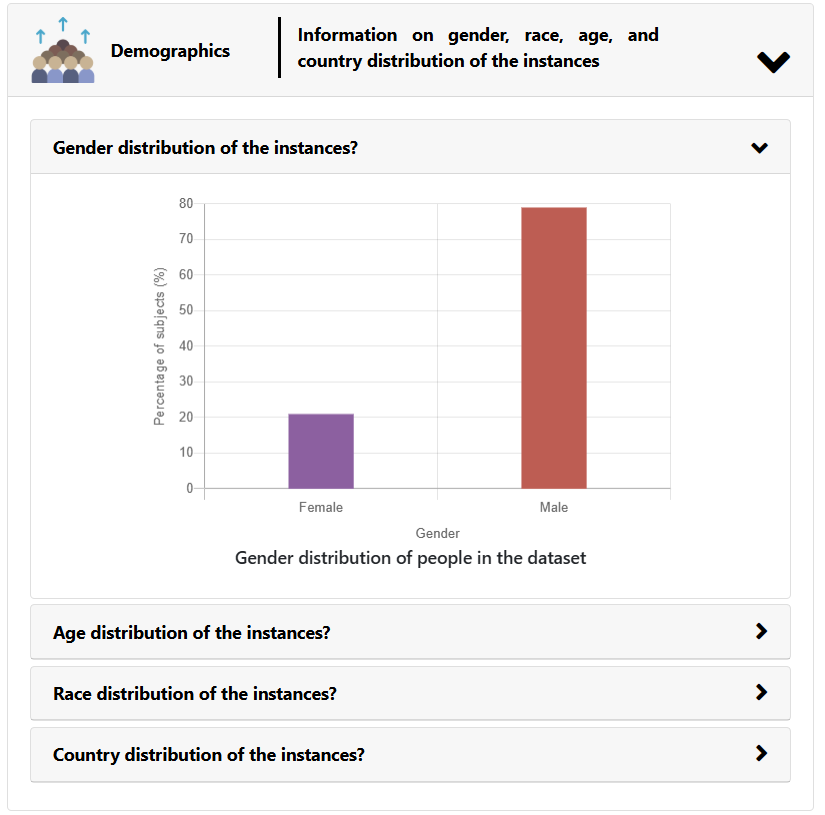

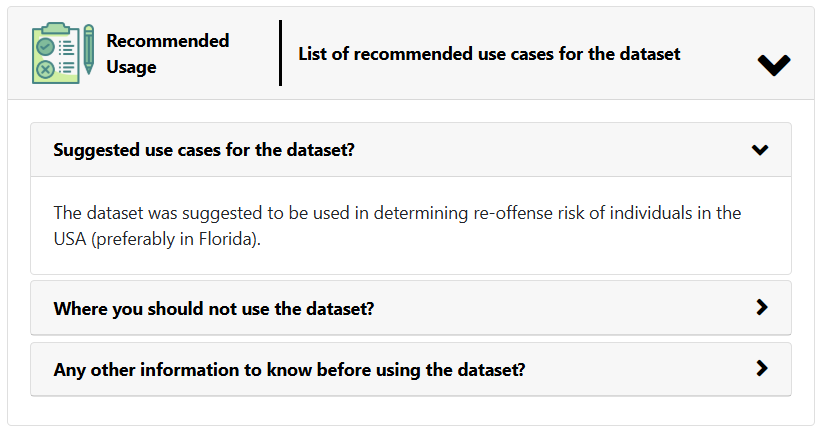

The second study investigated how data-centric explanations impact trust and fairness judgments across a range of AI scenarios and training data characteristics. Participants (9 ML experts, 8 intermediates, 10 beginners) interacted with four real-world scenarios: predictive bail decisions, facial expression recognition, automated admission decisions, and automated speech recognition. Two scenarios showed balanced training data; two revealed clear red flags such as demographic imbalances and high error rates. After reviewing each explanation, participants completed validated Likert-scale questionnaires on trust, fairness, and comfort, followed by a 40-60 minute semi-structured interview.

- Trust, fairness, and comfort were all significantly lower when explanations revealed red-flag training data (p < .001 for trust and fairness)

- Perceived utility of the explanations remained consistently high regardless of data quality: participants rated the explanations highly for helping them understand and reflect on the training process

- Expertise did not significantly affect any questionnaire measure; beginners and experts responded similarly to the explanations

- Demographics was the most influential category: two-thirds of participants cited it as the most useful, and participants across expertise levels were able to identify potential biases from the visualizations

- High-stakes scenarios (bail decisions, admissions) prompted deeper engagement; most participants (22/27) felt explanations were less necessary for low-stakes systems

- Explanations helped most participants (21/27) assess fairness to at least some extent, though some experts wanted additional information on decision factors
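
The participant counts reported for the two studies can be turned into percentages with a few lines of Python. This is a sketch for reading the numbers, not the analysis used in the study. The Wilson score interval is an added illustration of how much uncertainty remains at these sample sizes; the study does not report it, and the function and variable names are hypothetical.

```python
import math

# Counts (count, group size) as reported in the Study 1 and Study 2 findings.
reported = {
    "Study 1: unaware of fairness issues in ML": (9, 17),
    "Study 1: wanted more technical depth": (3, 17),
    "Study 2: explanations less necessary for low-stakes systems": (22, 27),
    "Study 2: explanations helped assess fairness": (21, 27),
}


def wilson_interval(count, total, z=1.96):
    """Approximate 95% Wilson score interval for a proportion (z = 1.96)."""
    p = count / total
    denom = 1 + z**2 / total
    center = (p + z**2 / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z**2 / (4 * total**2)) / denom
    return center - half, center + half


for label, (count, total) in reported.items():
    low, high = wilson_interval(count, total)
    print(f"{label}: {count}/{total} = {count / total:.0%} "
          f"(95% interval roughly {low:.0%} to {high:.0%})")

# Sanity check on the sample sizes: 9 experts + 8 intermediates + 10 beginners
# in Study 2, plus 17 participants in Study 1, gives the 44 reported overall.
study2_total = 9 + 8 + 10
overall_total = 17 + study2_total
```

With 17 and 27 participants the intervals are wide, so the fractions are best read as indications of direction rather than precise estimates for a wider population.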

Key Insights

Training data transparency produces calibrated, not inflated, trust

Trust moved in the direction the data warranted: up when explanations showed balanced, well-documented training data, and down when they surfaced red flags like demographic imbalances or high error rates. Participants were motivated, capable auditors when given the right information.

Data-centric explanations support fairness reasoning, but not fully

Demographics was the most influential category: two-thirds of participants cited it as the most useful, and people across all expertise levels identified potential biases from the visualizations. Most participants (21/27) felt the explanations helped them assess fairness, though some experts also wanted information on the decision process.

Non-experts were more capable than ML practitioners expected

Some ML-experienced participants worried the explanations would be too complex for non-experts. But beginners engaged with the information just as effectively as intermediates and experts, and no non-expert reported difficulty understanding the content.

High stakes amplify the value of transparency

Participants engaged most carefully with explanations in scenarios involving bail decisions and admissions, where the consequences of biased systems are most severe. Most felt explanations were less necessary for low-stakes systems like social media recommendations.

Design Implications

Core Insight

Transparency is not just about explaining decisions. It is about giving people the context they need to decide how much to trust those decisions in the first place.

- Make training data a first-class transparency artifact. Data-centric explanations should sit alongside outcome-level explanations, not replace them. Users can reason about data quality and demographics on their own terms; they do not need to understand a model's internals to form a meaningful judgment.

- Prioritize demographics and collection in the explanation design. These two categories drove the most trust and fairness reasoning. Explanation designers should lead with what matters most to users, not what is easiest to extract from a model.

- Design for calibrated trust, not maximum trust. An explanation that lowers trust by revealing problems is not a failed design; it is working as intended. The goal is informed judgment, not reassurance.

- Do not underestimate non-expert users. The assumption that training data information will overwhelm general audiences was not supported. Explanation designers should test accessibility with real users rather than relying on expert intuitions about what non-experts can handle.

Impact

This work established training data transparency as a recognized direction in XAI research and directly motivated the three follow-on studies in this portfolio. It was published at ACM CHI 2021.